Spatialized audio refers to sound that appears to come from a particular direction in 3D space. It is one of several inputs that combine to create a sense of immersion for the user within the VR environment. The Daydream SDK comes with a suite of robust components that allow us to effortlessly simulate the complex behavior of sounds in the real world.

Although the behaviors and underlying concepts behind spatial audio are quite complex, the audio components provided by the Daydream Unity SDK are very easy to use. For more information on spatial audio, check out this detailed explanation on the Daydream website.

This tutorial was built using Unity 5.4.1-GVR13 Technical Preview with version 1.1 of the Daydream Unity SDK. See my tutorial here on setting yourself up for Daydream development and my intro tut here on building a base scene with a controller and teleportation.

Directional Audio

I’ve created a fully working Unity project with samples of the Daydream audio components. Download it from here and have a poke around in the SDKBoyTut scene. You’ll see I’ve added some sounds to the project in WAV format and created a scene with GvrAudio components attached to various gameObjects scattered about the environment. There’s also an example of the Room Audio component. Run the project on your phone with headphones on and teleport around to get a feel for how spatialized audio works.

Plugin Setup

To get started with the SDK audio components, you first need to select GvrAudioSpatializer as the spatializer plugin.

- Edit > Project Settings > Audio

- In the Audio Manager inspector select GvrAudioSpatializer in the Spatializer Plugin drop-down menu.

Audio Listener

To hear sound from a 3D audio source, we need to add a GvrAudioListener to a gameObject. I’ve added it to the player’s camera, since the camera represents the player’s head and is the point the sound is heard from.

Audio Source

The next component we’re going to look at is the GvrAudioSource. This component emits sound from a particular point in space: we add it to a gameObject, which is then placed somewhere in the scene. When you’re wearing headphones or listening on a surround sound system, the audio appears to come from the direction of that gameObject in 3D space.
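To make "the direction of that gameObject" concrete, here is a minimal Python sketch of the geometry a spatializer starts from: where a source sits relative to the listener’s head, expressed as an azimuth, an elevation and a distance. It assumes Unity-style axes (x right, y up, z forward), models only the listener’s yaw, and uses a hypothetical `source_direction` helper. It is not part of the Daydream SDK, which goes on to render the direction binaurally (for example with HRTF filtering) rather than stopping at geometry.

```python
import math


def source_direction(listener_pos, listener_yaw_deg, source_pos):
    """Return (azimuth_deg, elevation_deg, distance) of a source relative to a listener.

    Hypothetical helper. Axes follow Unity's convention (x right, y up, z forward);
    azimuth is positive to the listener's right, and only yaw (turning the head
    left/right) is modeled, not pitch or roll.
    """
    dx = source_pos[0] - listener_pos[0]
    dy = source_pos[1] - listener_pos[1]
    dz = source_pos[2] - listener_pos[2]

    # Undo the listener's yaw so the offset is expressed in "head space".
    yaw = math.radians(listener_yaw_deg)
    local_x = dx * math.cos(yaw) - dz * math.sin(yaw)
    local_z = dx * math.sin(yaw) + dz * math.cos(yaw)

    azimuth = math.degrees(math.atan2(local_x, local_z))
    elevation = math.degrees(math.atan2(dy, math.hypot(local_x, local_z)))
    distance = math.sqrt(dx * dx + dy * dy + dz * dz)
    return azimuth, elevation, distance


# A sphere 3 m to the right of a listener who is facing forward (+z).
print(source_direction((0.0, 0.0, 0.0), 0.0, (3.0, 0.0, 0.0)))
```

Turning the head toward the sphere (a yaw of 90 degrees) brings its azimuth to roughly zero, which is why a spatialized sound appears to stay put in the world while you look around.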

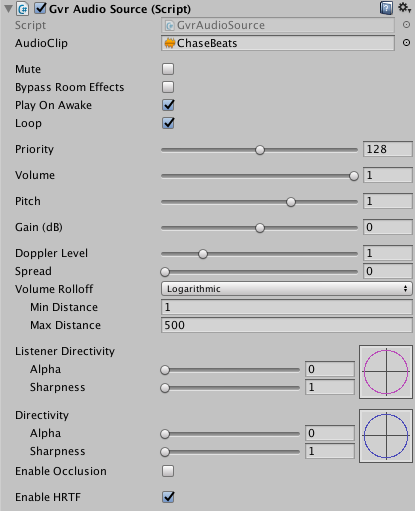

- To get it working, simply add a new gameObject to the scene, say a sphere, and in the sphere’s inspector click “Add Component” and choose GvrAudioSource.

- In the AudioClip field, drag and drop an audio file from your project. Unity accepts AIFF, WAV, MP3 and Ogg formats.

- I’ve set the Loop and PlayOnAwake booleans to true and left most of the other properties at their defaults.

Another useful property on the component is VolumeRollOff, which controls the maximum distance the sound can be heard from and the way the sound’s volume falls off over distance: Logarithmic or Linear. Also toggle Enable Occlusion if you want the audio to be muffled behind solid objects. You can see what my component looks like in the screenshot in this section.
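The two rolloff modes can be sketched in a few lines of Python. This is a simplified model that assumes the conventions of Unity’s built-in AudioSource: Linear fades from full volume at the minimum distance to silence at the maximum distance, while Logarithmic follows an inverse-distance curve and simply stops attenuating at the maximum distance. The function names are hypothetical, and GvrAudioSource’s exact curves may differ.

```python
import math


def linear_rolloff(distance, min_distance, max_distance):
    """Gain falls in a straight line from 1.0 at min_distance to 0.0 at max_distance."""
    if distance <= min_distance:
        return 1.0
    if distance >= max_distance:
        return 0.0
    return 1.0 - (distance - min_distance) / (max_distance - min_distance)


def logarithmic_rolloff(distance, min_distance, max_distance):
    """Inverse-distance gain (about -6 dB per doubling of distance).

    Distance is clamped to [min_distance, max_distance], so the gain stays at 1.0
    up close and stops dropping beyond max_distance.
    """
    d = min(max(distance, min_distance), max_distance)
    return min_distance / d


def to_db(gain):
    """Convert a linear gain to decibels (silence maps to -infinity)."""
    return 20.0 * math.log10(gain) if gain > 0 else float("-inf")


# Compare the two curves for a source with min distance 1 m and max distance 16 m.
for d in (1, 2, 4, 8, 16):
    lin = linear_rolloff(d, 1, 16)
    log_db = to_db(logarithmic_rolloff(d, 1, 16))
    print(f"{d:>2} m  linear gain {lin:.2f}  logarithmic {log_db:.1f} dB")
```

The logarithmic curve drops quickly near the source and then flattens out, which tends to sound more natural; the linear curve is easier to reason about when you need a sound to disappear completely at a known distance.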

Room Audio

The next component we’re going to look at is the GvrAudioRoom component. As the name suggests, it mimics the audio effects of a walled room. This component doesn’t produce any sound itself; instead it handles reverb and muffles sound passing through the room’s walls.

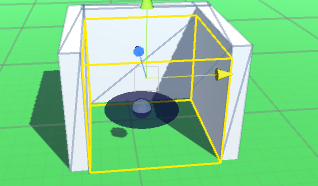

- Add the GvrAudioRoom component to an empty gameObject. In the demo, I’ve built some simple walls out of cubes to represent the room.

- The first thing you’ll notice after adding the Audio Room component is a yellow wireframe cube. This represents the size of the room. Scale this box using the Size properties in the GvrAudioRoom component.

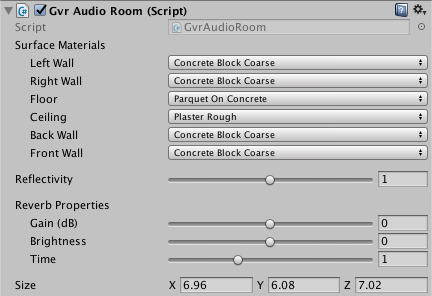

- By itself the Audio Room component doesn’t produce any sound; you’ll need to add a gameObject with a GvrAudioSource and place it inside the room. You can see my Audio Room inspector in the screenshots in this section.
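Because the room’s effects are tied to that yellow box, it helps to picture the check involved. The sketch below assumes the room’s reverb applies only while the listener is inside the box, treats the box as axis-aligned (ignoring the gameObject’s rotation and scale), and uses a hypothetical `listener_inside_room` helper rather than any SDK call.

```python
def listener_inside_room(listener_pos, room_center, room_size):
    """Return True if listener_pos lies inside an axis-aligned box.

    Hypothetical helper: room_center is the box's center and room_size its full
    width/height/depth (like the Size properties). Points on the boundary count
    as inside.
    """
    return all(
        abs(p - c) <= s / 2.0
        for p, c, s in zip(listener_pos, room_center, room_size)
    )


# A 4 m x 3 m x 4 m room whose center sits 1.5 m above the origin.
room_center = (0.0, 1.5, 0.0)
room_size = (4.0, 3.0, 4.0)
print(listener_inside_room((0.5, 1.7, -1.0), room_center, room_size))
print(listener_inside_room((3.0, 1.7, 0.0), room_center, room_size))
```

If you teleport the player out through a wall in the demo, this is the kind of transition where the room’s reverb would stop applying.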

The GvrAudioRoom gives you fine-grained control over the surface materials the walls are made of, things like concrete, glass, water, and plaster. We can also tweak the way sound echoes within the room through the reflectivity property and the reverb’s gain, brightness and delay time.
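To get a feel for how reflectivity, delay time and gain interact, here is a feedback comb filter, a classic building block of artificial reverb, written in Python. It is an illustration under stated assumptions, not the SDK’s reverb: `reflectivity` is treated as the feedback amount, `delay_s` as the spacing between echoes, and `reverb_gain` as the level of the echoes relative to the dry sound. Brightness and surface materials are not modeled, and the function name is hypothetical.

```python
def feedback_comb(samples, sample_rate, delay_s, reflectivity, reverb_gain=1.0):
    """Apply a feedback comb filter: comb[n] = x[n] + reflectivity * comb[n - d].

    Returns dry + reverb_gain * echoes, so reverb_gain=0 gives back the dry signal.
    """
    if not 0.0 <= reflectivity < 1.0:
        # A feedback amount of 1.0 or more would make the echoes never decay (or grow).
        raise ValueError("reflectivity must be in [0, 1)")
    d = max(1, round(delay_s * sample_rate))  # echo spacing in whole samples
    comb = []  # running comb output, needed for the feedback path
    out = []
    for n, x in enumerate(samples):
        echo = reflectivity * comb[n - d] if n >= d else 0.0
        comb.append(x + echo)
        out.append(x + reverb_gain * echo)
    return out


# A single click (impulse) played into a "room" with a 2-sample echo delay.
impulse = [1.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0]
print(feedback_comb(impulse, sample_rate=1, delay_s=2, reflectivity=0.5))
```

Each echo is quieter than the last by the reflectivity factor, so a more reflective room (think glass or concrete) rings on longer, while a longer delay spaces the echoes further apart, as in a bigger room.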

Sound Field

GvrAudioSoundfield plays multichannel ambisonic audio whose direction responds to head movement. Ambisonics is a large and complex field that is beyond the scope of this tutorial, but much of that complexity has been abstracted away by the Sound Field component. The Sound Field works with audio in a similar way to how a directional light works with lighting: sound comes from a specific direction no matter where you are. For this reason, it works well with 360 videos, where there is no translational movement, just rotation.

To use the GvrAudioSoundfield component for accurate directional sound, you’ll need a multichannel ambisonic file. There is a limitation in Unity that only allows two channels of audio per clip, rather than the usual four of an ambisonic file, so you’ll need to split your four channels into two stereo files using your preferred audio editing software. I’ve found the component also works with standard audio files if you want a constant ambient sound coming from a particular direction.
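Here is a small Python sketch of both ideas: encoding a mono sample into first-order ambisonics so that its direction is baked into the channels (much like a directional light), then splitting the four channels into two stereo pairs. It assumes the AmbiX convention (ACN channel order W, Y, Z, X with SN3D normalization, azimuth measured counterclockwise so positive values are to the listener’s left) and assumes the component wants channels 1 and 2 in one clip and channels 3 and 4 in the other; check the SDK documentation for the exact layout it expects. Both function names are hypothetical.

```python
import math


def encode_first_order_ambix(sample, azimuth_deg, elevation_deg):
    """Encode one mono sample as a first-order AmbiX frame (W, Y, Z, X).

    Assumes ACN channel order with SN3D normalization; azimuth 0 is straight
    ahead and positive azimuth is to the listener's left.
    """
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    w = sample                                 # omnidirectional component
    y = sample * math.sin(az) * math.cos(el)   # left/right
    z = sample * math.sin(el)                  # up/down
    x = sample * math.cos(az) * math.cos(el)   # front/back
    return (w, y, z, x)


def split_into_stereo_pairs(frames):
    """Split four-channel frames into two stereo streams: channels 1-2 and 3-4."""
    pair_a = [(f[0], f[1]) for f in frames]  # channels 1 and 2 (W, Y)
    pair_b = [(f[2], f[3]) for f in frames]  # channels 3 and 4 (Z, X)
    return pair_a, pair_b


# A short mono signal placed 90 degrees to the listener's left, at ear height.
mono = [0.0, 0.5, 1.0, 0.5, 0.0]
frames = [encode_first_order_ambix(s, 90.0, 0.0) for s in mono]
clip_1_2, clip_3_4 = split_into_stereo_pairs(frames)
print(clip_1_2)
print(clip_3_4)
```

In practice your audio editor does the splitting for you; the point of the sketch is that the direction lives in the relative levels of the channels, not in a position in the scene, which is why the sound field ignores translation.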

Spatial audio is a powerful tool in the VR developer’s toolkit: it adds an awareness of the surroundings and creates emotional impact. The suite of Gvr audio components in the Daydream SDK abstracts away the underlying complexity of spatialized audio, allowing VR developers to focus on what we do best: building weird and wonderful VR games and apps.

Disclaimer: I’m a Google employee and write blog posts like this with the sole purpose of encouraging and inspiring developers to start exploring Google’s amazing Daydream VR platform. Opinions expressed in this post are my own and do not reflect those of my employer. I would never share any secret or proprietary information.

SU

Thanks for this! Doesn’t the Gvr Audio Source also require a Gvr Audio Listener component to be heard?

@_SamKeene

@SU You’re absolutely correct. In my 3 AM haste to complete the tut I skipped a step (although it is in the demo package 😉 ). Updated, thanks for the catch!

SU

You’re welcome! This article really helped me set up my first spatial audio and the results blew me away. Thanks for another great tutorial! Much appreciated.

Sanket Satish Dongre

Hi, I have a query. Can we use spatialized audio for building Cardboard applications? For Android API 4.4 using the Unity Daydream Technical Preview?

@_SamKeene

Hi Sanket, I’ve never tried but I don’t see why not. Give it a go and let me know if it works. More info on Google VR spatialized audio in Unity here: https://developers.google.com/vr/unity/spatial-audio